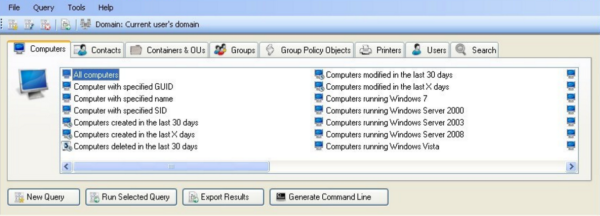

Deploying Kaseya VSA agents to provisioned workstations and servers from a standard image can be a challenge. The typical deployment methods result in duplicate GUIDs, because of the way provisioning clones a single image, which makes it impossible to accurately monitor and report on these machines. The following procedure has been tried and tested (successfully) numerous times on Citrix...

Intel, Cloud Telemetry and Jevons Paradox

I recently heard on a tech podcast that Intel were contributing to the open source community via their open telemetry framework, 'Snap'. Snap is designed to collect, process and publish system data through a single API. Here's a quick diagram, along with the project goals taken from the GitHub page, that will provide you...

Seven Deadly… Tools.

I have tried, tested and benefited from a plethora of nifty tools over the years. Some good, some bad... and some, well, just downright ugly!